The following is an interview with Cesar Turturro, an accomplished film director who received the prestigious 2022 Epic MegaGrant in recognition of his exceptional work on “Invasion 2040.”

Q: Hi Cesar, please tell us a little bit about yourself.

Hi, let me start from the beginning. Back in 2004, I embarked on my filmmaking journey with my inaugural war feature film, delving into the 1982 Malvinas War. For this project, I crafted VFX close-ups, seamlessly blending real images with 3D animation. Subsequently, I directed my maiden documentary on the same subject for The History Channel, featuring over 60 minutes of compelling air combat animations.

Continuing my creative exploration, I ventured into the realm of fan films, gaining worldwide attention with a production centered around Robotech. The film drew particular interest in Japan, accumulating over 200,000 views within a single day.

Q: How did you come up with the idea of creating the science fiction film “Nick 2040”?

Building on my previous experiences, I took a significant leap into creating my own science fiction realm named “Invasion 2040”. This transformative endeavor has spanned over seven years. Along the way, I secured an Epic MegaGrant, and our project caught the attention of Reallusion, leading to our participation in their Pitch & Produce program.

Taking yet another stride forward, I elevated the production to a cinematic level with “Nick 2040”, shooting in 6K using RED cameras. The post-production was meticulously rendered in 4K, thereby completing the trilogy of short films. This final installment serves as a proof of concept for an ambitious feature film, currently in the pre-production stage.

Achieving this is contingent upon having a significant portion of the VFX groundwork completed before filming. Presently, I am actively engaged in this preparatory phase: every scene, character, animation, and VFX test crafted for the short film is integrated into the overarching feature film. This approach lets me serve as both co-director and VFX director.

Q: Please describe your workflow with Reallusion software.

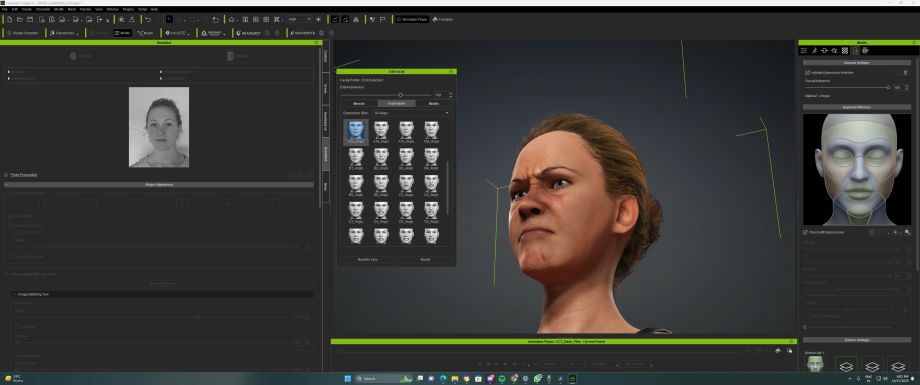

Phase 1 – Create realistic characters with Headshot and Dynamic Wrinkles

I begin with a facial scan of the robot using RealityScan. At the same time, I use Headshot to generate a face based on a photo. I then use ZBrush to merge both models into a mesh that closely resembles the target appearance, working from the mesh and the texture generated through the Headshot plug-in in Character Creator. Once finalized, I transfer all components to Unreal Engine to generate the robot’s face within a Metahuman framework. I also scan the clothing to enhance the overall realism of the final render.

I meticulously crafted the antagonist, a towering giant, by intricately detailing the facial features. Drawing inspiration from the expressions of Metahumans, I ventured beyond conventional methods. Employing Blender, I seamlessly merged an AccuRIG body with a Metahuman face, yielding a remarkably satisfying outcome.

Our breakthrough involved generating facial animations through Dynamic Wrinkles. This allowed us to achieve lifelike expressions on both the protagonist’s face and the characters featured in the invasion scenes.

Certain scenes were impractical to shoot with conventional methods, so they required a digital recreation of the real actress’s face. Using the Headshot plug-in, I scanned the actress’s face and refined the mesh in ZBrush in conjunction with CC4. I then animated it in iClone 8 and transferred all data to Unreal Engine via Live Link, producing an astounding result.

Harnessing the advanced capabilities of CC4’s custom character Dynamic Wrinkles and employing facial mocap, I effortlessly executed intricate facial expressions within mere minutes.

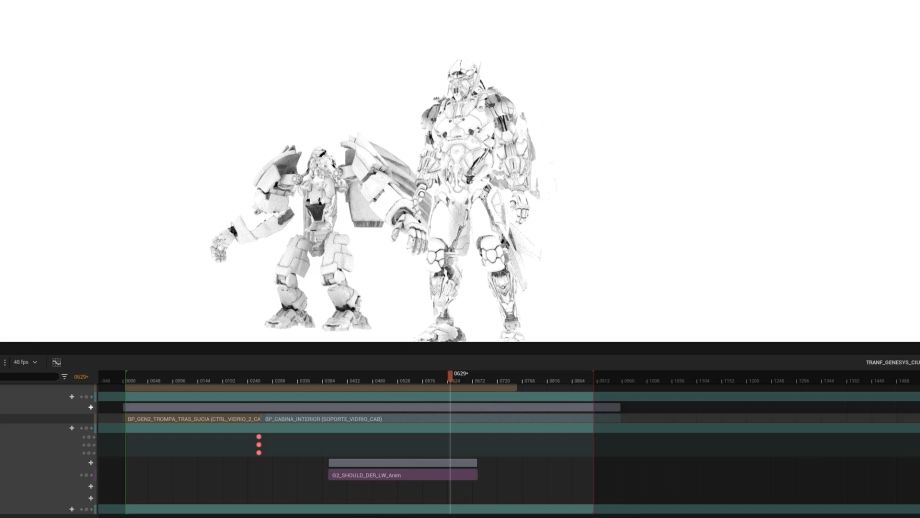

Phase 2 – Animate the fighting scene with iClone and ActorCore

The integration of iClone played a pivotal role in refining the animation of the transformation in “Invasion 2040”. This was particularly crucial as the character rises and places hands on the ground while undergoing a dynamic metamorphosis into a robotic form.

The invasion scene in “Nick 2040” was meticulously crafted in Unreal Engine, featuring a blend of characters sourced from different platforms: some from Metahumans and others from iClone. The practical and easily processable ActorCore scans, brought in through iClone’s Live Link, proved ideal for incorporating various animations from the “Run For Your Life” pack, which perfectly complemented the intensity of the invasion shots. To achieve a cohesive scene, I cloned the iClone animations onto the skeletons of the Metahuman characters in Unreal.

The climactic final combat between the robot and the Jumper was a two-part process. First, I choreographed the movements of both characters through mocap, personally performing the choreography and refining the contacts for impactful hits within iClone. These animations were then sent via Live Link to Unreal for rendering. In addition, the fight scene featured sequences sourced from Reallusion’s Marketplace, enhancing the overall dynamism and intensity of the confrontation.

Reallusion and iClone 8 have been instrumental in empowering me to create intricate animated scenes, featuring epic battles between giants and robots. These scenes, serving as the essence of my films, are brought to life through the robust features integrated into my workflow pipeline. The capabilities offered by these tools, particularly in the realm of CG and animation, play a pivotal role in realizing the vision and complexity inherent in my cinematic creations.

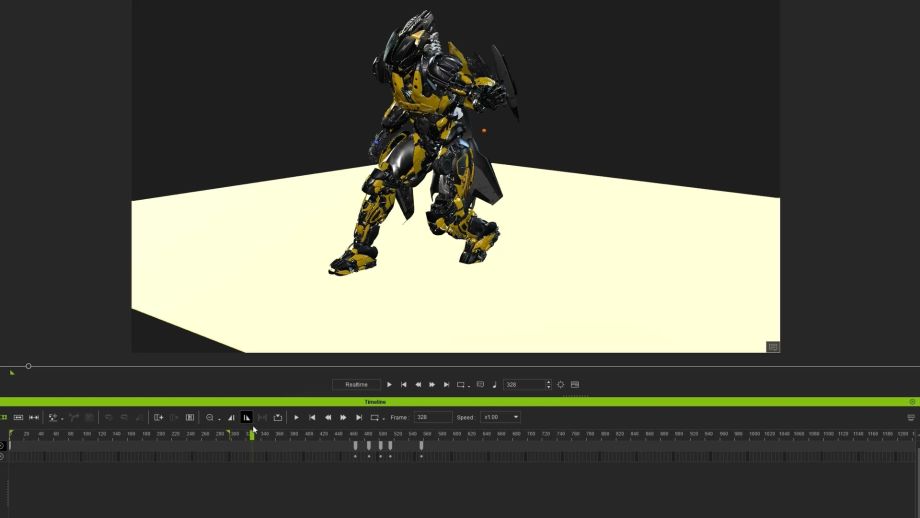

Phase 3 – Immediately preview the results with Unreal Live Link

The Live Link enhancement stands out as truly remarkable. The process of sending animations to Unreal is both seamless and stable. Correcting any movement is straightforward: I make adjustments in iClone, keeping to the specified start and end times on the timeline. Then, in the Unreal Sequencer, I replace the animation with just a few clicks. Within moments, the updated animation is ready for rendering.

The timecode sync render has also revolutionized my workflow. Now, I can seamlessly transfer objects to iClone, animate characters, and use those objects as references. The remarkable part is the speed at which I can send the animation to Unreal, taking mere seconds. This streamlined process has made animation exceptionally simple and fast, marking a significant improvement in my efficiency and creative capabilities.

Q: Why choose Reallusion?

We are currently finalizing the storyboard, shooting, budget, and VFX plan for “Nick 2040”, an essential undertaking that forms the backbone of our feature film. In a compact animation studio, where tasks are inherently complex, our small team of artists has gained immense capabilities through this process.

- Stability: The entire process is marked by absolute stability, with zero instances of crashes.

- Speed: Animations exceeding 1000 frames are transferred to Unreal in less than a minute. Remarkably, the processor and GPU often remain unburdened during this swift process.

- Organization: I streamline my workflow by saving diverse animations in iClone’s Content Browser. This allows for quick retrieval and utilization in various scenes of the film, as virtually all necessary sequences are conveniently stored there.

- Practicality: Incorporating animations from various sources, including Noitom mocaps, Mixamo animations, and Unreal character animations, is a breeze. A simple drag-and-drop action from the Explorer to iClone seamlessly integrates these animations into the project.

As we near completion, the trailer provides a glimpse of our work, and we eagerly anticipate the release of the final short.

Learn More

- Follow Nick 2040 created by Cesar Turturro

- Animate 3D characters and cinematic scenes with iClone

- Create realistic characters with Character Creator